Currently, Test Studio projects target .NET Framework 4.7.2 to 4.8.

That means our test project has to target .NET Framework in order to consume the framework DLLs [ArtOfTest.WebAii, ArtOfTest.WebAii.Design].

We would really like to migrate our test project to .NET Core in order to align with our application under test (which is .NET Core).

The built-in Test Studio workflow for PDF validation requires the file to be downloaded and then opened in a new tab.

Allow the opposite scenario where the user wants to directly open the PDF file in the browser instead.

Note: That way the opened file will not be readable from within Test Studio and only connecting to and closing the new tab will be supported.

------------------------------------------------------------

Failure Information:

~~~~~~~~~~~~~~~

ExecuteCommand failed!

InError set by the client. Client Error:

Protocol error (Input.dispatchKeyEvent): Invalid 'text' parameter

BrowserCommand (Type:'Action',Info:'NotSet',Action:'RealKeyboardAction',Target:'null',Data:'keyDown#$TS$#INVIO',ClientId:'xxx',HasFrames:'False',FramesInfo:'',TargetFrameIndex:'-1',InError:'True',Response:'Protocol error (Input.dispatchKeyEvent): Invalid 'text' parameter')

InnerException: none.

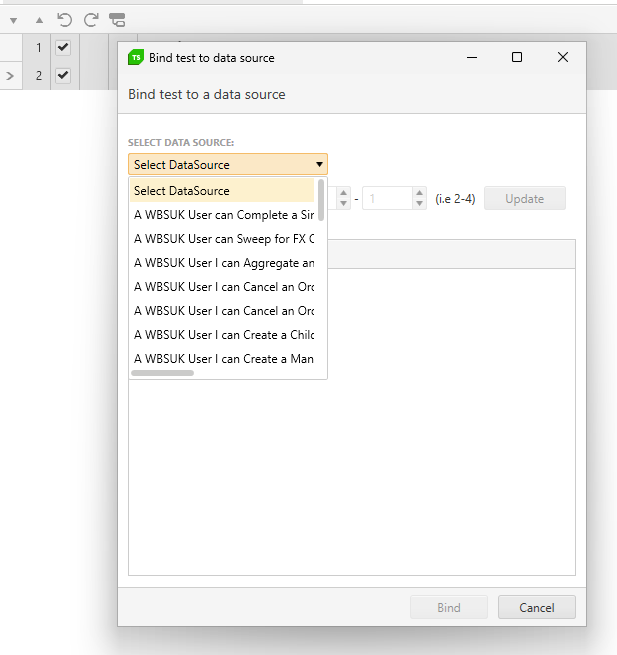

Currently, with lots of bound data sheets the visibility when binding them is poor. See

Can this be improved by widening the view and making it a search field rather than a drop-down? The selection will likely grow considerably, making it even harder to locate the file I need.

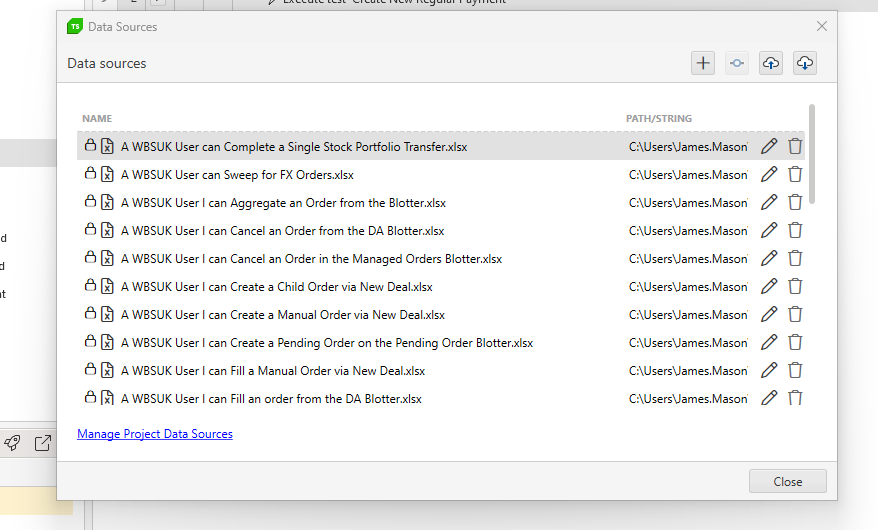

In addition, when managing the data, there should also be a search to filter for the data source I want to edit or manage.

Hello people at Telerik,

Is it possible to Data Bind a Radmaskedtextinput using the Test Studio User Interface?

In more detail:

I am automating a WPF application (Data Driven).

I want to fill out forms with multiple types of input fields (like Date, Comboboxes and also Radmaskedtextinput).

I have bound my test to an Excel file.

For fields like comboboxes and dates I am able to select the data to be used by clicking on the button "Data Binding" in a test step.

For me, this is "using the Test Studio Interface". (See Databind_combobox)

For "Radmaskedtextinput" type fields I am not able to do this. Clicking on the dropdown arrow at the right of a recorded test step shows nothing. (See Databind_radmaskettextinput)

Workaround:

I am able to data bind the step by converting the test step to a coded step and changing the argument of the TypeText function (see Databind_Code). This works, but selecting through the Test Studio UI seems easier.

Thanks in advance!

With friendly regards,

Robert

Currently, the Test Studio execution extension exposes only the duration of the overall test, in the OnAfterTestCompleted() method.

Extend the functionality to include an option to get the duration of each individually executed step.

Dear Telerik team,

We recently encountered an inconvenience when using ArtOfTest.WebAii.Win32.SaveAsDialog.CreateSaveAsDialog(params).

The issue occurs in the SaveAsDialog.Handle() method. There, the keyboard input uses fixed parameters for delay and hold: _desktopObject.KeyBoard.TypeText(_filePath, 50, 50, supportUnicode: true);

This unnecessarily slows down our tests, so it would be desirable either to change the input parameters to a reasonably short time (why not 0, 0?) or to give the developer the option to set the parameters manually, since there is no need for these delays in automated tests.

Best regards

A custom web app requires keypad authentication to log in, and this uses an extension in Chrome/Edge.

Test Studio does not allow signing in because the Chrome instance opened by Telerik does not allow me to enable the extension.

I have tried this with both Edge and Chrome, and it does not work in either.

Explore possible solutions for supporting this kind of app.

Currently, WPF tests always require an application to be configured and launched upon test start. So, one always needs to use a dummy app when using the 'Connect to app' and 'Launch app' steps.

Enable the WPF test configuration with an option not to launch an application, similar to the configuration experience for Desktop tests.

When I open TS -> Results ->

Let's say it is Wednesday at noon and I have daily runs. I see some runs that have already executed, marked green or red, and some yellow tiles for future runs. When I try to check the name of the PC node where a single task (list) will be executed, that information is not shown on the tile itself. Moreover, when I edit the task, I see the settings for frequency and time, and in the next step I see the name of the selected list, but in the step after that, where all nodes are listed, nothing is selected. That looks like a bug. So, if I have 20 tasks scheduled, I need to rely on my own notes to be sure I am editing the correct one. The name of the PC is available only for tasks that have already executed. I do not see why it is not visible for future runs.

I need to export the contents of our test lists to a CSV, TXT or Excel file. There is no option other than exporting the generated results from a test list run.

However, I need to be able to export the tests in a test list before I get to the point of executing them.

There is no option in the Test Studio recording capabilities to add a step that sets the value of the WPF RadSlider control. It would be useful to have such a step, similar to the one for the WPF slider control.

The workaround is to set this in a coded step like this:

// Accepts values from 0 to 1

Applications.SliderTestexe.MainWindow.Item0Radslider.Value = 0.25;

Currently, when running multiple test cases in Telerik Test Studio, we encounter the need to repeatedly launch and close the application under test for each individual test case. This process of launching and terminating the application adds unnecessary overhead and significantly extends the time required to execute our test suite.

To streamline our testing process and improve efficiency, we would like to suggest the implementation of an option that allows us to run multiple test cases without automatically killing the application at the end of each test case execution.

This feature would enable us to execute multiple test cases sequentially within the same application instance, eliminating the need to repeatedly launch and close the application for each test case. As a result, we would experience significant time savings and improved productivity in our testing efforts.

We believe that adding this option to Telerik Test Studio would greatly benefit your users and enhance the overall usability and efficiency of the tool.

We kindly request your consideration of this feature request, and we would greatly appreciate any updates or feedback on the feasibility of implementing this option in a future release of Telerik Test Studio.

Thank you for your attention to this matter. We look forward to your response.

Hello,

Currently, when I create a Git repo and connect Test Studio, it creates a default branch called "master".

Would it be possible to make it consistent with good practice and Git standard and rename it to "main" in the next version of Test Studio?

Can this be added as a feature request, please?

Thank you,

Max
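
Until the default changes, the branch can be renamed by hand with standard Git commands. The following is only a minimal sketch, not a Test Studio feature: it assumes a plain local Git repository, and it uses a throwaway temporary directory and a placeholder commit identity purely for illustration. In a real project only the `git branch -m` line (and, if a remote exists, the commented push commands) would be needed.

```shell
set -eu

# Illustration only: build a throwaway repository whose default branch is "master".
repo="$(mktemp -d)"
cd "$repo"
git init --quiet
git symbolic-ref HEAD refs/heads/master
git -c user.name="Example" -c user.email="example@example.com" \
    commit --quiet --allow-empty -m "Initial commit"

# The actual workaround: rename the local branch from "master" to "main".
git branch -m master main

# If the repository has a remote, the new name would also need to be published, e.g.:
#   git push -u origin main
#   git push origin --delete master   # only after the remote default branch is switched

# Show the branch that is now checked out.
current_branch="$(git symbolic-ref --short HEAD)"
echo "$current_branch"
```

For repositories created directly with Git 2.28 or later, `git config --global init.defaultBranch main` sets the default name for new repositories; whether Test Studio honors that setting when it creates the repository is not confirmed here.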

When generating videos for the test runs from a test list, the output video files use only the name of the test. There is no indication of which test list the test was executed from, and with multiple runs and videos it is difficult to relate them to the generated results.

It will be useful to generate the names of the videos from a test list in a way to correspond to the test list name and particular run.

Currently, the exported result contains extended details only for the failed steps. If there is a warning for a step, such as the warning that the element was found only by image, it can only be seen in the Test Studio result file.

Extending the HTML exported result to show the step warning details would be very useful for anyone who reviews this type of result (attached to an email after a scheduled run, for example).