When recording test steps in Test Studio, the elements you interact with are added to the Elements panel and are organised by window title.

If a window has a dynamic title, e.g. "PRODUCT - LOGGED IN USER J.SMITH", then when we record clicking a button the element appears under the window "PRODUCT - LOGGED IN USER J.SMITH". This works fine as long as the test runs as this user, and therefore against this window title.

I'm aware you can ask Test Studio to do partial window matching, as described here: https://docs.telerik.com/teststudio/troubleshooting-guide/test-execution-problems-tg/window-partial-match. However, this only works for elements that are already known.

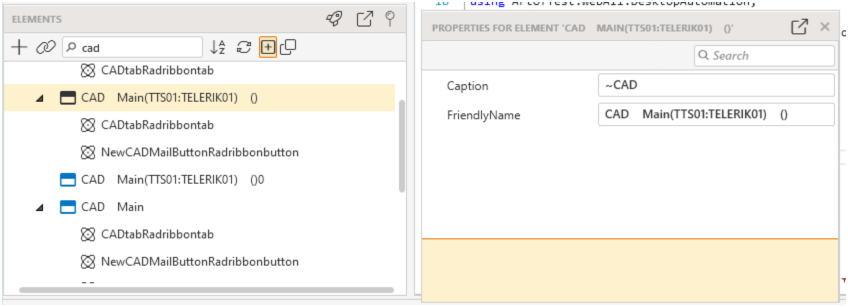

If I create a test, record the steps, and set the window title to use partial matching, e.g. "~PRODUCT - LOGGED IN", this works. But if I then record a click on another button, Test Studio recreates the original window "PRODUCT - LOGGED IN USER J.SMITH", and I have to set up partial matching all over again.

This request is to allow dragging and dropping elements from one window into another, so that the window title does not have to be recreated over and over for each control added to the Elements panel.

In this example, 'CAD' can be prefixed by the database name (MAIN), then the user and workstation (TTS01:TELERIK01). CAD is always there, so I use "~CAD", which works. But if I interact with a control again as part of another test, it is recreated in its own window folder.
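To make the requested behaviour concrete, here is a minimal Python sketch. It is not Test Studio's implementation; it only assumes, based on the "~CAD" example above, that a leading "~" means "the title contains the rest of the pattern". The function names and the sample window title are hypothetical.

```python
def caption_matches(pattern: str, window_title: str) -> bool:
    # Assumption: a leading "~" means "the title contains the rest of the
    # pattern"; any other pattern must equal the title exactly.
    if pattern.startswith("~"):
        return pattern[1:] in window_title
    return pattern == window_title


def window_node_for(recorded_title: str, existing_nodes: list[str]) -> str:
    # Reuse the first existing node whose pattern matches the recorded title;
    # only fall back to a new exact-title node when nothing matches.
    for pattern in existing_nodes:
        if caption_matches(pattern, recorded_title):
            return pattern
    return recorded_title


nodes = ["~CAD"]
# Hypothetical title built from the parts named in the post.
title = "MAIN - CAD - TTS01:TELERIK01"
print(window_node_for(title, nodes))      # ~CAD
print(window_node_for("Notepad", nodes))  # Notepad
```

With a lookup like this at recording time, a newly recorded control would land under the existing "~CAD" node instead of a fresh exact-title node.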

I have a number of tests scheduled to run every night.

I selected one of the scheduled tests and made a clone of it, which I also renamed. I then edited the cloned version and removed half of the test cases.

For the upcoming scheduled runs, my original test is listed with the correct name. When I analyse the results, however, they show that only the test cases remaining in the new cloned version were run. So for some reason it seems that the wrong test list was chosen.

The Test Studio execution log currently writes a "Success" message and provides a file path for failure videos even when the write operation fails due to environment issues (e.g., a missing directory).

Notes: While it is understood that the configured folder should ideally exist prior to runtime, the system should not provide "trustful" logs that contradict the actual state of the machine's filesystem.
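As an illustration of the expected behaviour, the Python sketch below checks the filesystem before reporting success. It is only a sketch under stated assumptions: the function name, logger name, and log messages are hypothetical and are not taken from Test Studio.

```python
import logging
from pathlib import Path

log = logging.getLogger("execution")  # hypothetical logger name


def report_failure_video(video_path: Path) -> bool:
    # Check the filesystem instead of assuming the write succeeded.
    if video_path.is_file() and video_path.stat().st_size > 0:
        log.info("Failure video saved: %s", video_path)
        return True
    log.error("Failure video was not saved (missing or empty file): %s", video_path)
    return False
```

The point is simply that the success message and the path are logged only after the file is confirmed to exist on disk.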

When Daylight Saving Time ended on November 6, our scheduled tests started running an hour earlier than scheduled. I had to "edit" each schedule (without actually changing any settings) and save it in order for the time in the "TimeToRun" setting in the job details file to adjust for the time change. It would be nice if this could happen automatically, or if there were a right-click option for updating the time.
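One plausible explanation, which the post does not confirm, is that the run time is stored as a fixed UTC time of day rather than as local wall-clock time. The Python sketch below shows the difference. The time zone, the year 2022, the 22:00 run time, and the function name are all assumptions chosen for illustration.

```python
from datetime import date, datetime, time, timezone
from zoneinfo import ZoneInfo

ZONE = ZoneInfo("America/New_York")  # assumption: example zone only


def run_instant_utc(run_date: date, wall_clock: time, zone: ZoneInfo) -> datetime:
    # Attach the zone to the local wall-clock time, then convert to UTC.
    # zoneinfo applies the UTC offset that is in force on that date.
    local = datetime.combine(run_date, wall_clock, tzinfo=zone)
    return local.astimezone(timezone.utc)


before = run_instant_utc(date(2022, 11, 5), time(22, 0), ZONE)
after = run_instant_utc(date(2022, 11, 7), time(22, 0), ZONE)
print(before.time(), after.time())  # 02:00:00 03:00:00

# A UTC time of day frozen before the change lands one hour early afterwards.
frozen = datetime(2022, 11, 8, 2, 0, tzinfo=timezone.utc)
print(frozen.astimezone(ZONE).time())  # 21:00:00
```

Recomputing the UTC instant from the local wall-clock time for each run date would keep the schedule at 22:00 local time across the change, which is what re-saving each schedule by hand appears to achieve.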

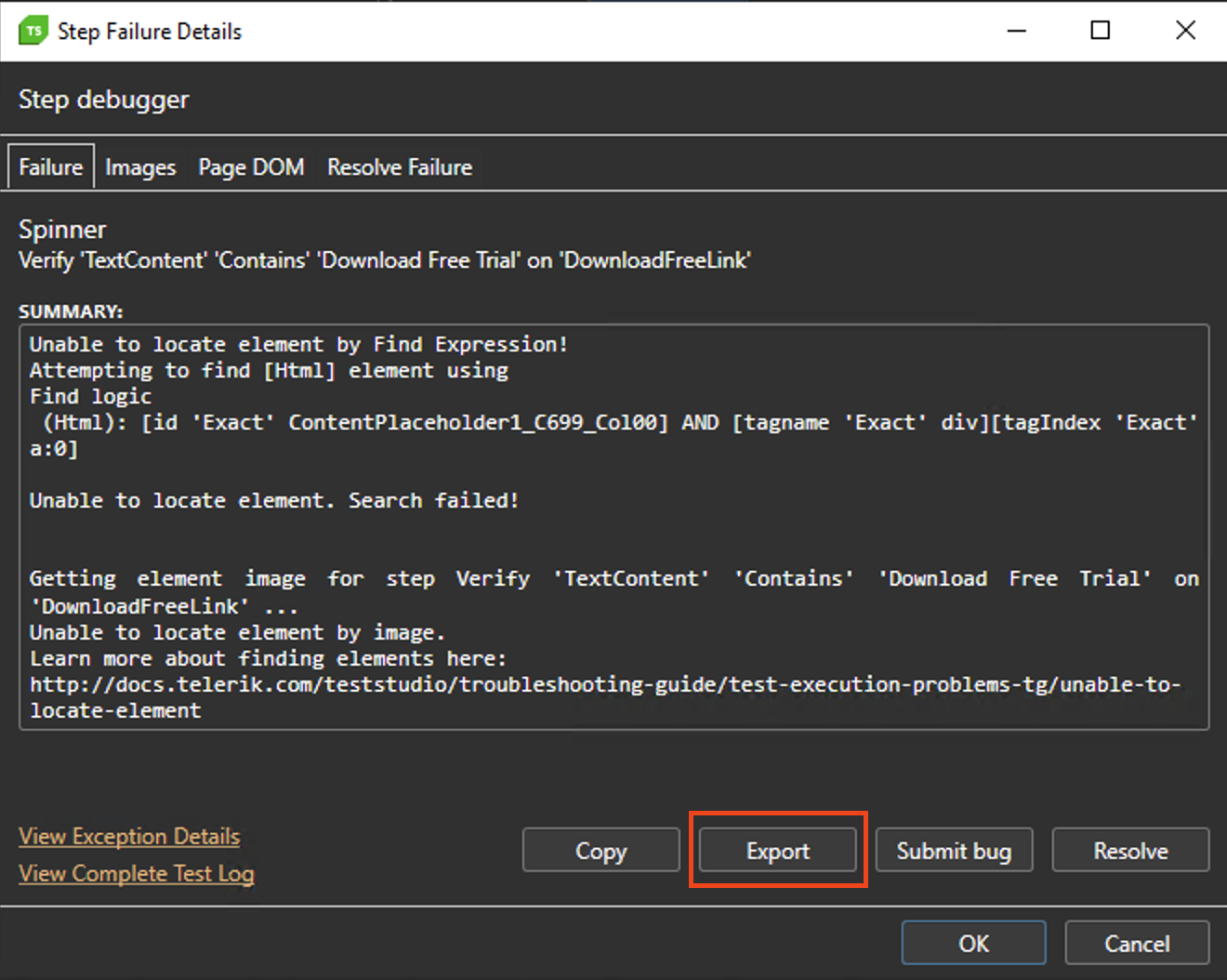

Trying to export Step Failure Details from Test Results does not export the zip file and throws an exception in the log.

As a workaround, you can export Step Failure Details from Quick Execute.

We would like to request official support for .NET 10 and .NET 11 in Telerik Test Studio to ensure compatibility with upcoming framework versions.

.NET 10 was announced in Nov 2025: Announcing .NET 10 - .NET Blog

A preview of .NET 11 has been available since Feb 10th: .NET 11 Preview 1 is now available! - .NET Blog

After updating Telerik Test Studio to the newest version, when I try to open the details of a test step, a “Save Project on Error” prompt appears and the whole application closes automatically. The same happens when I try to record a new step. Most of the steps that trigger the automatic close are steps such as enter text, bind data, etc.

As a result, my whole project can no longer be executed.

Hello,

After using the tool for a few days, I noticed that it’s currently not possible to create subfolders within main folders. It would be extremely helpful to have this feature, especially for larger websites with many pages.

In my case, I have a large number of tests, and it quickly becomes difficult to locate specific ones. Allowing subfolders would make it much easier to organize tests by page, category, or functionality/feature and to find what we need more efficiently.

Thank you for considering this request!

Best regards,

Since updating to version 2025.3.812.1, most of our WPF tests fail with the following exception.

After the update we didn't receive a message that the project is being upgraded.

Timed-out waiting for the object to become visible.

System.TimeoutException: Timed-out waiting for the object to become visible.

at ArtOfTest.WebAii.Silverlight.VisualWait.ForNoMotion(Int32 initialWait, Int32 motionCheckInterval, Int32 timeout)

at ArtOfTest.WebAii.Design.Execution.ExecutionUtils.WaitForNoMotionIfNeeded(AutomationDescriptor descriptor, Int32 timeout)

at ArtOfTest.WebAii.Design.Execution.ExecutionUtils.WaitForAllElements(IAutomationHost host, AutomationDescriptor descriptor, Int32 timeout, Int32 imageSearchTimeout, Int32 imageSearchDelay, Boolean searchByImageFirst)

at ArtOfTest.WebAii.Design.Execution.ExecutionEngine.ExecuteStep(Int32 order)

It also appears that the system does not wait for motion to complete and fails immediately. The motion timeout is currently set to the default of 500 ms.

Currently, Test Studio projects target .NET Framework 4.7.2 to 4.8.

That means our test project has to target .NET Framework in order to consume the framework DLLs [ArtOfTest.WebAii, ArtOfTest.WebAii.Design].

We would really like to migrate our test project to .NET Core in order to align with our application under test (which is .NET Core).

Chrome and Edge version 139 cannot record or execute Test Studio tests.

Steps to reproduce for test execution:

- Open a web test and execute it.

Expected: The test to be executed as expected.

Actual: The test stops at the Navigate step and fails with the following exception in the test execution log:

Failure Information: ~~~~~~~~~~~~~~~ Wait for condition has timed out

InnerException: System.TimeoutException: Wait for condition has timed out

at ArtOfTest.Common.WaitSync.CheckResult(WaitSync wait, String extraExceptionInfo, Object target)

at ArtOfTest.Common.WaitSync.For[T](Predicate`1 predicate, T target, Boolean invertCondition, Int32 timeout, WaitResultType errorResultType)

at ArtOfTest.Common.WaitSync.For[T](Predicate`1 predicate, T target, Boolean invertCondition, Int32 timeout)

at ArtOfTest.WebAii.Core.Browser.WaitUntilReady()

at ArtOfTest.WebAii.Core.Browser.ExecuteCommand(BrowserCommand request, Boolean performDomRefresh, Boolean waitUntilReady)

at ArtOfTest.WebAii.Core.Browser.ExecuteCommand(BrowserCommand request)

at ArtOfTest.WebAii.Core.Browser.InternalNavigateTo(Uri uri, Boolean useDecodedUrl)

Steps to reproduce for test recording:

- Open a web test and start recording.

Expected: The browser to navigate to the selected page and to continue with the recorder attached.

Actual: The browser is launched but stays on about:blank and fails with the following exception in the application log:

EXCEPTION! (see below)

Outer Exception Type: ArtOfTest.WebAii.Exceptions.ExecuteCommandException

Message: ExecuteCommand failed!

InError set by the client. Client Error:

Protocol error (Page.navigate): Invalid referrerPolicy

BrowserCommand (Type:'Action',Info:'NotSet',Action:'NavigateTo',Target:'null',Data:'https://telerik.com/',ClientId:'BFD7ED2D99EFCBA532CA527E680A6AC8',HasFrames:'False',FramesInfo:'',TargetFrameIndex:'-1',InError:'True',Response:'Protocol error (Page.navigate): Invalid referrerPolicy')

InnerException: none.

I started watching the first video, Use Test Lists in Test Studio - YouTube, and the sound level is far too low, even though I am at 100% volume. I even tried on a different computer, with the same problem. It was a bit better there, but as the comment on the video says, "if you had the volume up at the music volume at the end then you would not have a problem". I hope your other tutorial videos are better.

Is there any option to change the user interface in Test Studio (and in its Visual Studio plugin too) where the list of available coded methods is very hard to read? There is a huge amount of space available on the screen to make this dropdown very wide (auto-scaling it to show the full names would be a good idea), but despite that the list is very narrow, so reading and selecting the proper method is extremely uncomfortable for a developer.

regards

Michal

I am trying to run tests separately. The user must log in before any additional test can run. The login test works, but when I run additional tests, the cookies/session are forgotten.

Test Sequence:

login: creates ASP.NET session cookies

select company: depends on the ASP.NET session cookies and creates a company cookie

select employee: depends on the ASP.NET session cookie and the company cookie

How do I keep the cookies and/or keep the browser open between running individual tests?

Steps to reproduce:

- Record the steps to select time from a radDateTimePicker component.

- The recorded step is 'datetimepickerclock: select time ''.'

- Convert it to code.

Expected: The code is compiled and executed as expected.

Actual: The converted code produces a compilation error:

[ Compiler ] 15:57:58 'ERROR' > C:\TestProject1\WPFTest.tstest.cs(55,104) : error CS1061: 'FrameworkElement' does not contain a definition for 'SelectTime' and no accessible extension method 'SelectTime' accepting a first argument of type 'FrameworkElement' could be found (are you missing a using directive or an assembly reference?)

15:57:58 'INFO' > Build Failed

Workaround: Cast the element like this:

Applications.WPF_Demosexe.Telerik_UI_for_WPF_Desktop_Examples.PARTClockDatetimepickerclock.CastAs<Telerik.WebAii.Controls.Xaml.Wpf.DateTimePickerClock>().SelectTime("1:00 PM");

When we start recording against a specific app using the extensionless browser, the browser crashes and we are not able to proceed with the recording. The error displayed in the browser console and in the Test Studio log is:

Page Error: Error: Bootstrap tooltips require Tether (http://tether.io/)