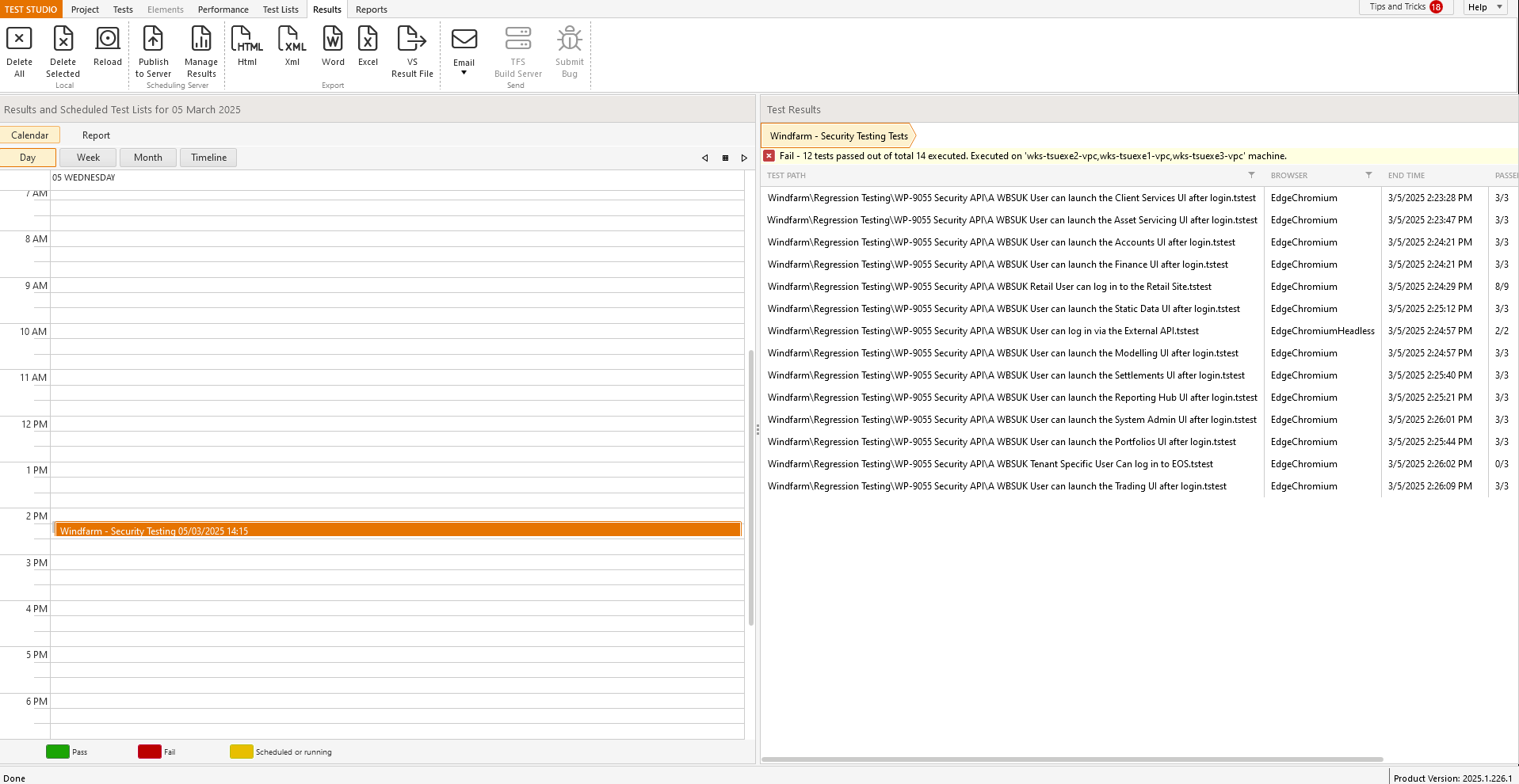

Currently the results view is split exactly 50/50, but this seems wasteful because the view on the left does not need this much screen real estate; see the screenshot.

Can this be adjusted so that more of the right panel is visible by default? That would remove the need to resize the panels manually every time I want to view the results.

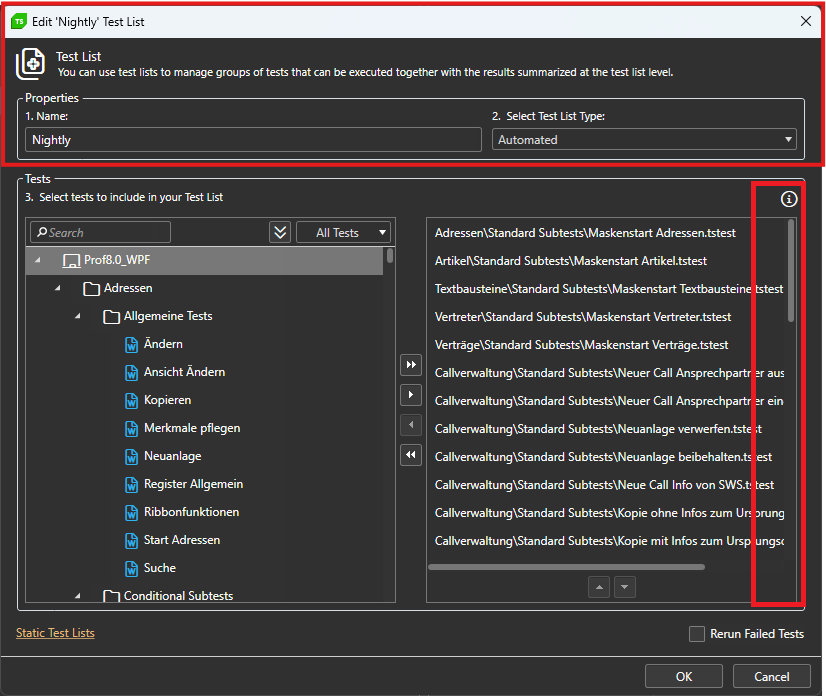

The window for sorting tests while editing the testlist can't be maximized.

Long test paths can't be read in full, and the trash bin icon for deleting a test can only be reached by using the scrollbar.

While we have attempted workarounds, such as capturing WebSocket traffic using Fiddler, these solutions do not yield the expected results within Test Studio. The inability to accurately simulate WebSocket communication during load testing compromises the reliability of our performance assessments.

Adding WebSocket support would greatly enhance the functionality of Test Studio and better serve the needs of developers working with modern real-time applications.

Once I have the browser up and running, I don't need to start from 'Log onto URL'. That is why I use partial test runs so often.

I may be running only part of a test, but when the test is 500 steps long it's cumbersome to highlight the section I want to run and mark where to stop.

It would be much easier to set a breakpoint, run the part of the test I need, and stop midway.

Every day, and usually many times a day, I forget to turn it off and begin recording random steps. Then I have to go find them and delete them.

Once Annotations is on, leave it on, similar to the Debugger.

Along with it, leave the ms setting where it was.

Every time I log on, it takes me a few minutes to realize Annotations is off.

Edit in Code:

If no change is made to the file, do not convert it to custom code.

I believe the staff would get fewer help tickets caused by 'coded' steps.

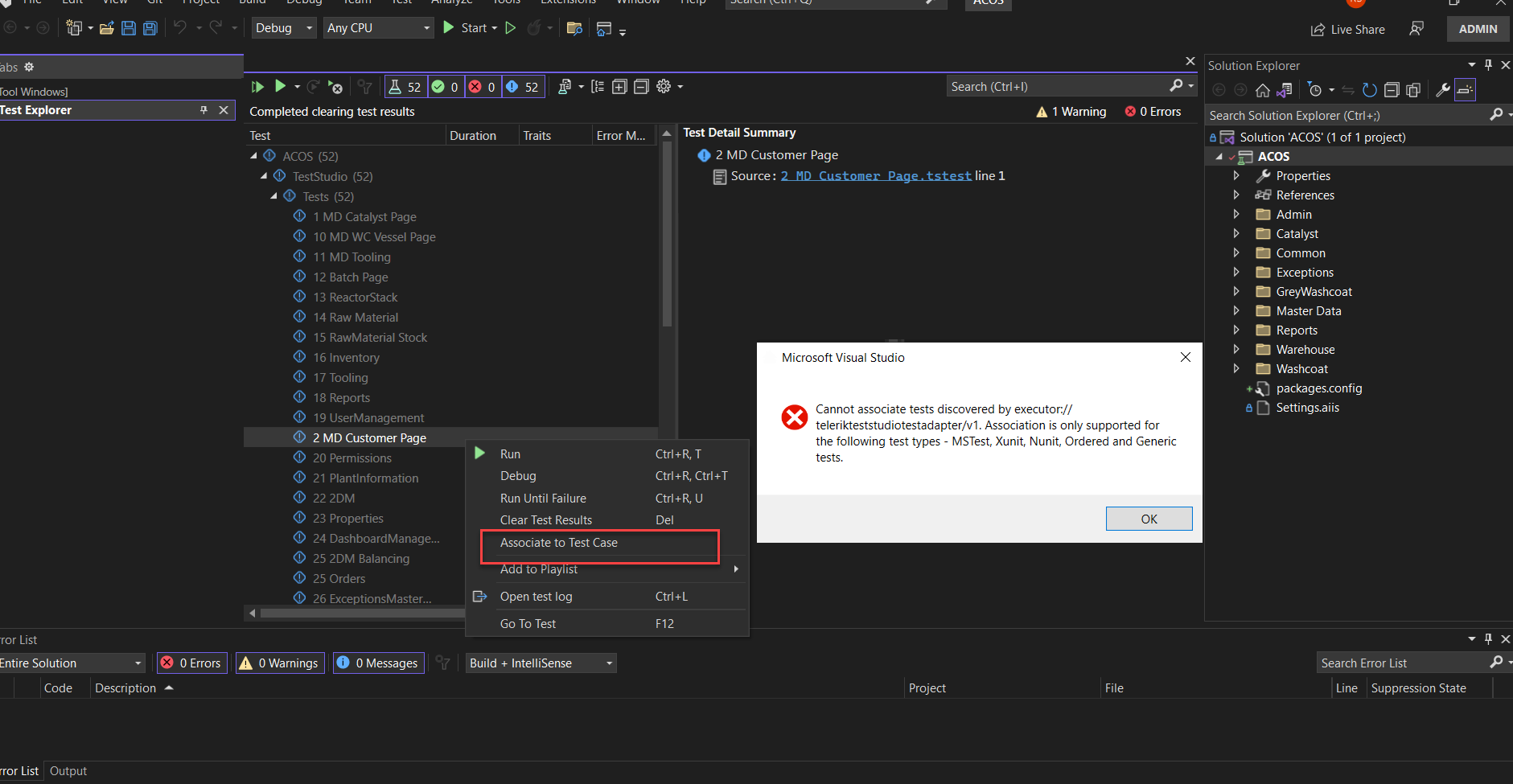

Visual Studio and Azure Pipelines only support associating unit tests with test cases, not Test Studio tests. There should be an easy way to convert Test Studio tests to unit tests (NUnit, JUnit, etc.). Otherwise the Azure DevOps CI/CD integration is incomplete.

There are no built-in translators for Kendo React controls.

It would be useful to revisit the story and evaluate the need for such translators.

There is no direct method in the Testing Framework for scrolling the RadGanttView control in a WPF app.

It would be useful to explore such an implementation.

It would be nice if there were a way to avoid simulating real typing for a search box. There is no option to enable or disable it, but the step is clearly using this behavior. I've tried a workaround of entering text directly in the input element, but the text doesn't seem to register when this technique is used. I don't see why a textbox can be made to work without this behavior but a search box cannot.

Simulating real typing tends to be the most fragile part of our tests, and all we really need is to enter text and then search. It also slows down the tests quite a bit vs. just setting the text directly.

Test runs that contain Not Completed tests shouldn't report the overall result as Pass or Fail. Rather, the overall run should be set to "Not Complete".

In addition, when loading Not Completed tests within test runs, they are just shown as failed, with no background and white text. The run is listed as Pass if there are no failures, even when there are "Not Complete" tests.

Recommendation: add Not Complete as an overall result, in addition to marking individual tests that are not in a passed or failed state.
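To make the requested rule concrete, here is a minimal sketch of how the overall result could be rolled up. It is only an illustration of the behavior I am asking for, not how Test Studio works internally; the `Outcome` enum and `overall_result` function are hypothetical names, and treating an empty run as Not Complete is my own assumption.

```python
from enum import Enum
from typing import Iterable


class Outcome(Enum):
    """Hypothetical per-test outcomes; names are illustrative only."""
    PASS = "Pass"
    FAIL = "Fail"
    NOT_COMPLETE = "Not Complete"


def overall_result(outcomes: Iterable[Outcome]) -> Outcome:
    """Roll individual test outcomes up into one run-level outcome."""
    results = list(outcomes)
    # Any unfinished test means the run cannot honestly be called Pass or Fail.
    # Assumption: a run with no results at all is also treated as Not Complete.
    if not results or Outcome.NOT_COMPLETE in results:
        return Outcome.NOT_COMPLETE
    # All tests finished: a single failure fails the run.
    if Outcome.FAIL in results:
        return Outcome.FAIL
    return Outcome.PASS
```

With this rule, a run with only passed and Not Completed tests reports Not Complete instead of Pass, which is the case described above.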

Currently Test Studio uploads only the necessary tests and resources to the storage server. When the test execution starts on the execution server, however, Test Studio downloads everything available on the storage server.

Please check how the download can be optimized while still ensuring that all resources necessary for the test execution are available.
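One possible direction, sketched under assumptions: compare a manifest of the resources the scheduled tests need against what is already present on the execution server, and download only the difference. The manifest format (path mapped to a content hash), the function names, and the file names in the usage example are all hypothetical; nothing here reflects the actual storage service API.

```python
import hashlib
from typing import Dict, List


def content_hash(data: bytes) -> str:
    """Hash file content so changed files can be detected by comparison."""
    return hashlib.sha256(data).hexdigest()


def files_to_download(required: Dict[str, str], local: Dict[str, str]) -> List[str]:
    """Return required paths that are missing or outdated on the execution server.

    required: path -> hash of each resource the scheduled tests need
    local:    path -> hash of each file already cached on the execution server
    """
    return sorted(path for path, digest in required.items()
                  if local.get(path) != digest)


# Hypothetical usage: only the changed test and the missing data file are fetched.
required = {
    "Login.tstest": content_hash(b"v2"),
    "Data/users.csv": content_hash(b"rows"),
    "Pages.g.cs": content_hash(b"same"),
}
local = {
    "Login.tstest": content_hash(b"v1"),   # outdated copy
    "Pages.g.cs": content_hash(b"same"),   # already up to date
}
pending = files_to_download(required, local)
```

Files that exist on the storage server but are not in the required manifest are never downloaded, which is the optimization this request is about.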

I think it would be neat to be able to view and edit markdown files in a project from within the Test Studio application: markdown files would show up in the project list and open in an editor tab, so you can review them and make edits.

For example, I create markdown files that contain high-level summaries of what each test covers, or potentially helpful debugging information for a particular test.

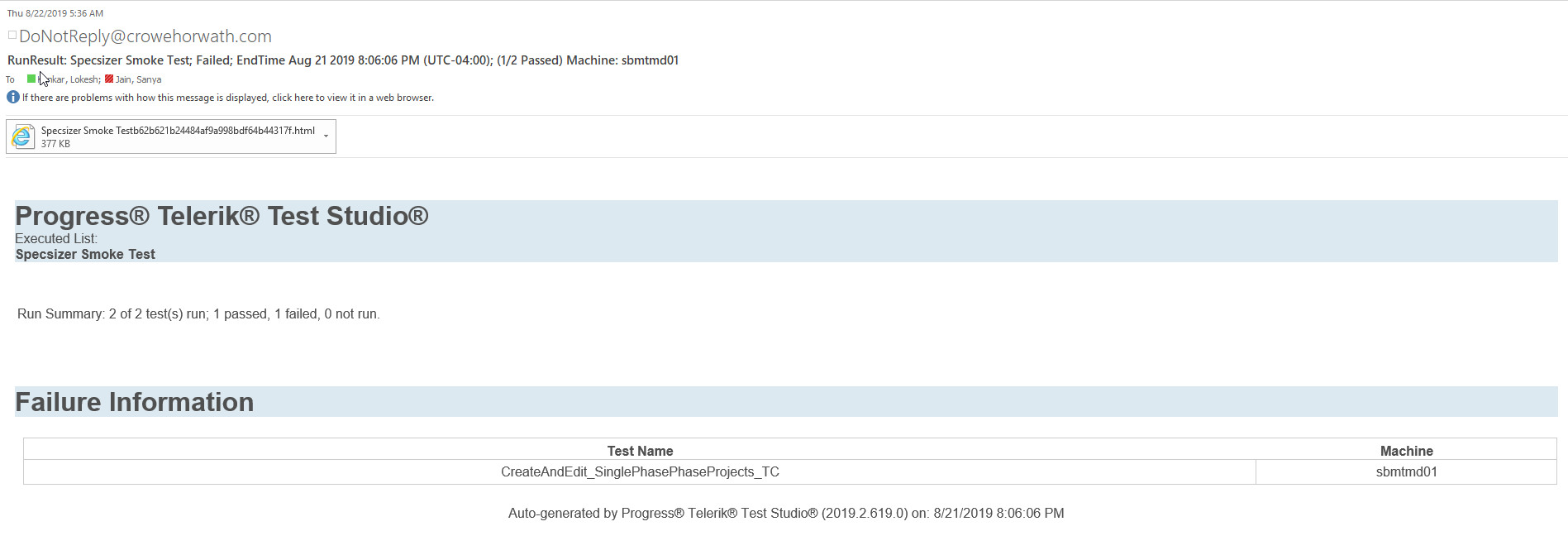

The email body of the report is not as detailed as the attached HTML. It should be, so that the leadership team can view the failed steps from the email without downloading the attachment.

    <Polygon
        Points="16, 32 32, 32 24, 18"
        Visibility="Visible"
        ToolTipService.ShowDuration="360000"
        HorizontalAlignment="Right"
        Width="32">
        <Polygon.Fill>
            <SolidColorBrush Color="Aqua"/>
        </Polygon.Fill>
        <Polygon.ToolTip>
            <StackPanel>
                <TextBlock Text="Foo"/>
                <Ellipse Width="20" Height="20" Fill="Pink"/>
                <TextBlock Text="Bar"/>
            </StackPanel>
        </Polygon.ToolTip>
    </Polygon>