When Daylight Saving Time ended on November 6, our scheduled tests started running an hour earlier than scheduled. I had to "edit" each schedule (without actually changing any settings) and save it so that the time in the "TimeToRun" setting in the job details file would adjust for the time change. It would be nice if this could happen automatically. Alternatively, a right-click option to update the time would help.
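One plausible explanation is that the scheduled time is converted to a fixed UTC time when the schedule is saved and is never re-derived from the local wall-clock time. This is an assumption; the "TimeToRun" format is not described here. The Python sketch below reproduces the one-hour shift and shows the usual fix, which is to store the local wall-clock time and attach the time zone separately for each run day. The time zone (America/New_York), the 09:00 schedule, and the 2022 dates are hypothetical inputs.

```python
from datetime import date, datetime, time, timezone
from zoneinfo import ZoneInfo

# Hypothetical local time zone of the machine running the scheduled tests.
LOCAL_TZ = ZoneInfo("America/New_York")


def frozen_utc_run_time(saved_at_local: datetime, run_day: date) -> datetime:
    """Assumed buggy pattern: convert the wall-clock time to UTC once, when the
    schedule is saved, and reuse that UTC time of day on every later run day."""
    utc_time_of_day = saved_at_local.astimezone(timezone.utc).timetz()
    return datetime.combine(run_day, utc_time_of_day).astimezone(LOCAL_TZ)


def wall_clock_run_time(wall_clock: time, run_day: date) -> datetime:
    """Fix: keep the local wall-clock time and attach the zone for each run day,
    so the UTC offset (EDT or EST) is resolved per day."""
    return datetime.combine(run_day, wall_clock, tzinfo=LOCAL_TZ)


saved = datetime(2022, 11, 1, 9, 0, tzinfo=LOCAL_TZ)  # saved while EDT (UTC-4) applies
after_dst_end = date(2022, 11, 7)                     # a day on EST (UTC-5)

print(frozen_utc_run_time(saved, after_dst_end))       # 2022-11-07 08:00:00-05:00
print(wall_clock_run_time(time(9, 0), after_dst_end))  # 2022-11-07 09:00:00-05:00
```

In the frozen variant, the run drifts from 09:00 to 08:00 local time, matching the "an hour earlier" symptom. Re-saving the schedule, as in the workaround above, would recompute the UTC value under the new offset.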

We would like to request official support for .NET 10 and .NET 11 in Telerik Test Studio to ensure compatibility with upcoming framework versions.

.NET 10 was announced in Nov 2025: Announcing .NET 10 - .NET Blog

The first preview of .NET 11 has been available since February 10: .NET 11 Preview 1 is now available! - .NET Blog

Hello,

After using the tool for a few days, I noticed that it’s currently not possible to create subfolders within main folders. It would be extremely helpful to have this feature, especially for larger websites with many pages.

In my case, I have a large number of tests, and it quickly becomes difficult to locate specific ones. Allowing subfolders would make it much easier to organize tests by page, category, or functionality/feature and to find what we need more efficiently.

Thank you for considering this request!

Best regards,

Currently, Test Studio projects target .NET Framework 4.7.2 to 4.8.

This means our test project must also target .NET Framework in order to consume the framework DLLs (ArtOfTest.WebAii, ArtOfTest.WebAii.Design).

We would like to migrate our test project to .NET Core to align it with our application under test, which runs on .NET Core.

Is there any option to change this ugly user interface in Test Studio (and in its Visual Studio plugin), where the list of available coded methods is very hard to read? There is plenty of space on the screen to make this dropdown much wider (auto-sizing it to show full names would be a good idea here), yet the list is very narrow, which makes reading and selecting the right method extremely uncomfortable for a developer.

Regards

Michal

Test Studio's built-in workflow for PDF validation requires the file to be downloaded and then opened in a new tab.

Please also support the opposite scenario, where the user opens the PDF file directly in the browser.

Note: In that scenario, the opened file will not be readable from within Test Studio; only connecting to and closing the new tab will be supported.

------------------------------------------------------------

Failure Information:

~~~~~~~~~~~~~~~

ExecuteCommand failed!

InError set by the client. Client Error:

Protocol error (Input.dispatchKeyEvent): Invalid 'text' parameter

BrowserCommand (Type:'Action',Info:'NotSet',Action:'RealKeyboardAction',Target:'null',Data:'keyDown#$TS$#INVIO',ClientId:'xxx',HasFrames:'False',FramesInfo:'',TargetFrameIndex:'-1',InError:'True',Response:'Protocol error (Input.dispatchKeyEvent): Invalid 'text' parameter')

InnerException: none.

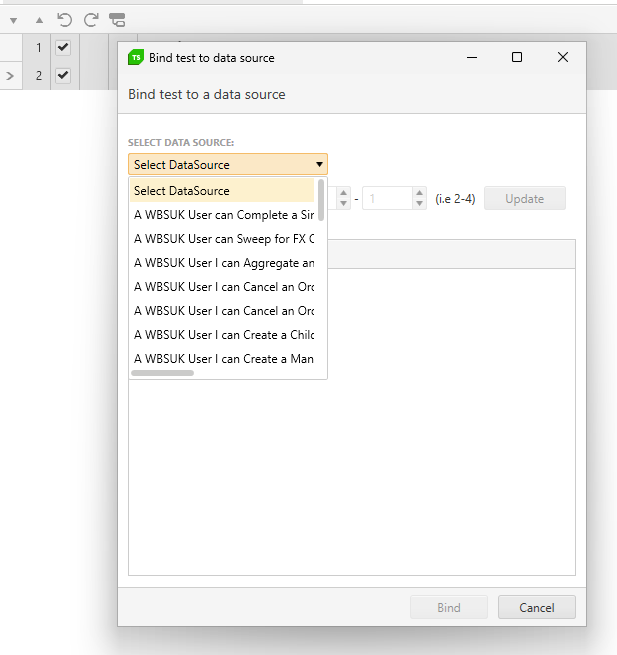

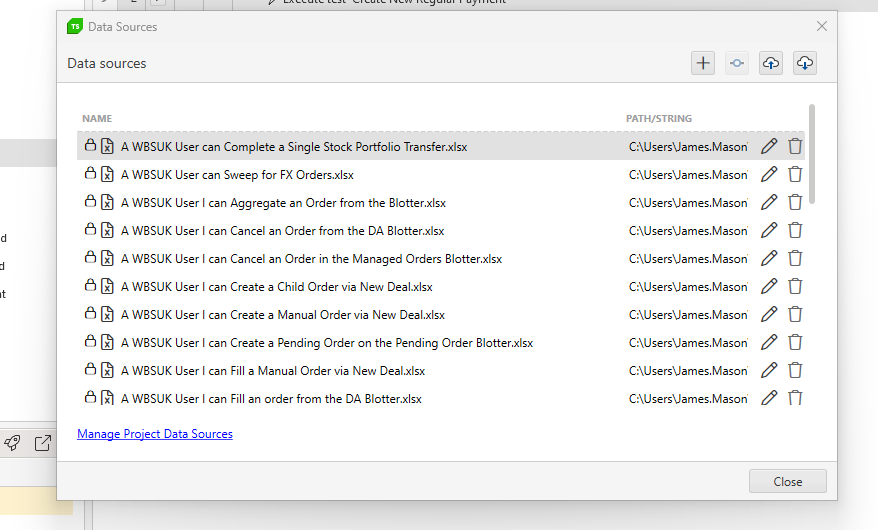

Currently, when many data sheets are bound, they are hard to see while binding. See

Can this be improved by widening the view and replacing the dropdown with a search field? The list will likely grow considerably, making it even harder to locate the file I need.

In addition, the data management view should offer a search to filter for the data source I want to edit or manage.

Hello people at Telerik,

Is it possible to data bind a RadMaskedTextInput using the Test Studio user interface?

In more detail:

I am automating a WPF application (data-driven).

I want to fill out forms with multiple types of input fields (such as date fields, combo boxes, and RadMaskedTextInput).

I have bound my test to an Excel file.

For combo boxes and date fields, I can select the data to use by clicking the "Data Binding" button in a test step.

This is what I mean by "using the Test Studio interface" (see Databind_combobox).

For RadMaskedTextInput fields, I cannot do this: clicking the dropdown arrow to the right of a recorded test step shows nothing (see Databind_radmaskettextinput).

Workaround:

I can data bind the step by converting the test step to a coded step and changing the argument of the TypeText function (see Databind_Code). This works, but selecting the data through the Test Studio UI would be easier.

Thanks in advance!

With friendly regards,

Robert

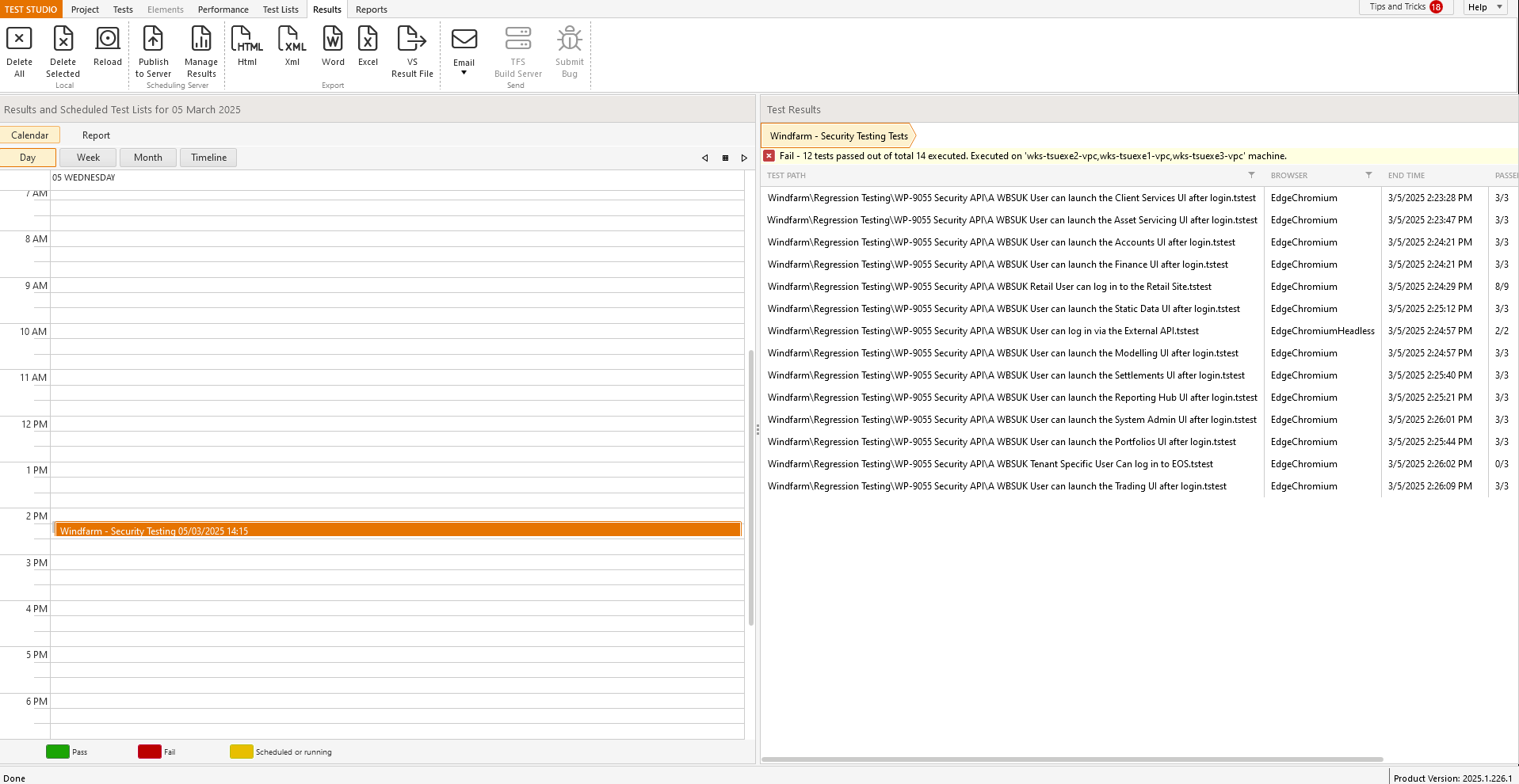

Currently, the results view is split exactly 50/50. This wastes space, because the left panel does not need that much screen real estate; see

Can this be adjusted so that more of the right panel is visible by default? That would remove the need to resize it manually every time I want to view the results.

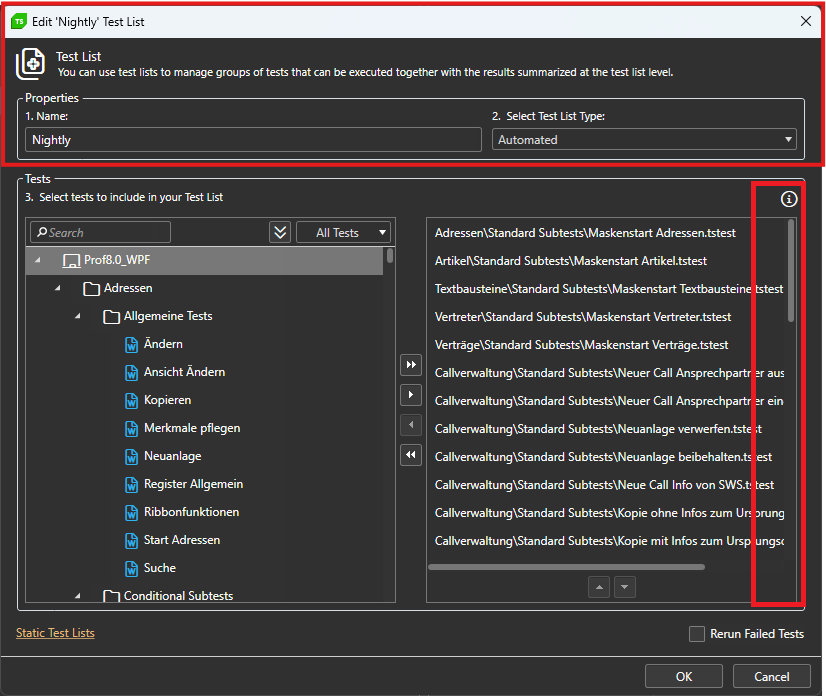

The window for sorting tests while editing the test list cannot be maximized.

Long test paths cannot be read properly, and the trash bin for deleting a test can only be reached via the scrollbar.

Steps to reproduce:

- Schedule a test list with 'Rerun failed tests' option set to true.

- Use a project, uploaded to Storage, that produces a compilation error on execution.

- Check the test results.

Expected: The test list run does not execute any of the tests due to the compilation error and logs a failed test list result.

Actual: The test list run does not execute any of the tests due to the compilation error and logs a passed test list result.
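The report does not say how the test list result is computed. One common way this symptom arises is to aggregate the list result as "every executed test passed". This is a hypothesis, not something confirmed from Test Studio's code. Over zero executed tests, that check is vacuously true. The Python sketch below contrasts that rule with one in which a compilation failure fails the whole list. The `ListRunSummary` type and both functions are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ListRunSummary:
    """Hypothetical summary of one scheduled test list run."""
    compiled: bool
    results: list[bool] = field(default_factory=list)  # True = test passed


def naive_list_passed(run: ListRunSummary) -> bool:
    # all() of an empty list is True, so a run that executed no tests "passes".
    return all(run.results)


def strict_list_passed(run: ListRunSummary) -> bool:
    # A compilation failure fails the whole list, even though no test ran.
    return run.compiled and all(run.results)


broken = ListRunSummary(compiled=False)  # compilation error: nothing executed
print(naive_list_passed(broken), strict_list_passed(broken))  # True False
```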

While we have attempted workarounds, such as capturing WebSocket traffic using Fiddler, these solutions do not yield the expected results within Test Studio. The inability to accurately simulate WebSocket communication during load testing compromises the reliability of our performance assessments.

Adding WebSocket support would greatly enhance the functionality of Test Studio and better serve the needs of developers working with modern real-time applications.

Currently, a Test Studio execution extension can only get the duration of the overall test, in the OnAfterTestCompleted() method.

Please extend this functionality with an option to get the duration of each executed step.

Dear Telerik team,

We recently encountered an inconvenience when using ArtOfTest.WebAii.Win32.SaveAsDialog.CreateSaveAsDialog(params).

The issue is in the SaveAsDialog.Handle() method, where the keyboard input uses fixed values for delay and hold: _desktopObject.KeyBoard.TypeText(_filePath, 50, 50, supportUnicode: true);

This slows our tests down unnecessarily. Since automated tests do not need these delays, it would be desirable either to reduce the parameters to a reasonably short time (why not 0, 0?) or to let developers set them manually.

Best regards
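To put the cost in numbers, the Python sketch below estimates the typing time for a file path. It assumes, without confirmation from the WebAii documentation, that both values are in milliseconds and that each typed character waits for the delay plus the hold time. Under that assumption, the time grows linearly with the path length. The function name and default values are hypothetical.

```python
def estimated_typing_ms(text: str, delay_ms: int = 50, hold_ms: int = 50) -> int:
    """Rough cost model (an assumption, not WebAii's documented behavior):
    every character waits delay_ms and is held down for hold_ms."""
    return len(text) * (delay_ms + hold_ms)


sample_path = "x" * 60  # stand-in for a 60-character file path
print(estimated_typing_ms(sample_path))        # 6000
print(estimated_typing_ms(sample_path, 0, 0))  # 0
```

Under this model, a 60-character path costs about six seconds per Save As dialog, which adds up across a suite that handles many downloads.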

Currently, Test Studio does not show a Save prompt when an unsaved test case file is closed, so users may lose track of changed files.

Request: when any unsaved file is closed in Test Studio, it should show a Save dialog so the user notices and can decide whether to save.

Currently, Test Studio automatically removes elements that are not used by any test case, without notifying the user.

In real-world scenarios, those elements may be needed in later test cases, and it is a nightmare when an element cannot be found when needed. Those elements may also be used in custom code files.

Request: enhance Test Studio to let the user decide which elements to remove. For example, it could highlight unused elements or change their font, so that the user is notified and can manage them afterwards.
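The requested behavior, flagging unused elements rather than deleting them, can be sketched in Python as follows. The element names, the file names, and the idea of modeling references as a mapping from file to element names are all hypothetical; this does not model Test Studio's actual element repository. The reference map includes custom code files, which addresses the concern that an element may be used only from code.

```python
def find_unused_elements(repository: list[str], references: dict[str, set[str]]) -> list[str]:
    """Return element names that no test or code file references.

    The repository list is only read, never modified, so the caller can
    highlight the returned names instead of deleting them."""
    used: set[str] = set()
    for element_names in references.values():
        used.update(element_names)
    return sorted(set(repository) - used)


repository = ["LoginButton", "SearchBox", "LegacyMenu"]
references = {
    "Login.tstest": {"LoginButton"},
    "Helpers.cs": {"SearchBox"},  # custom code files count as references too
}
print(find_unused_elements(repository, references))  # ['LegacyMenu']
```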