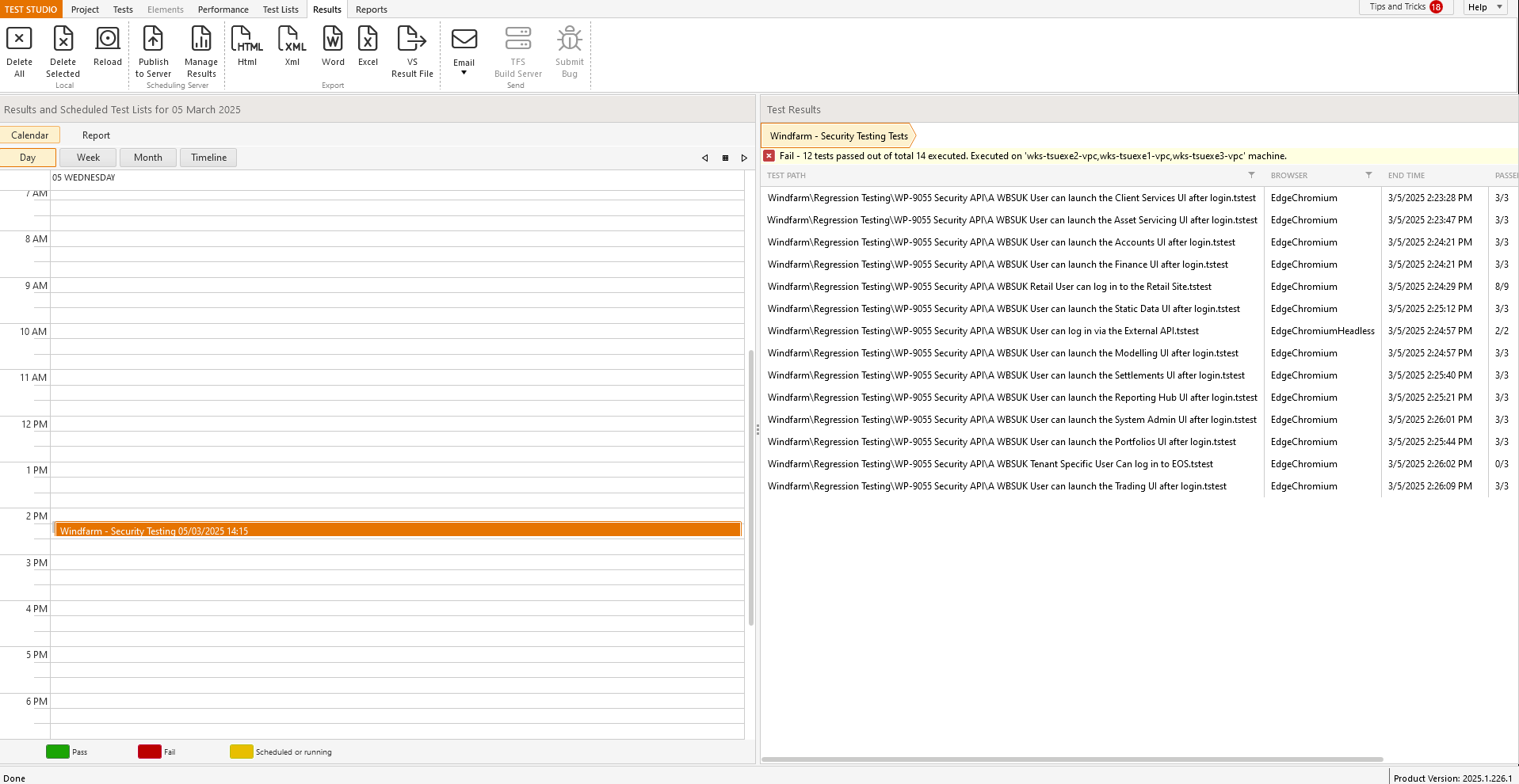

Currently the results view is divided exactly 50/50, but this seems to be a waste, as the view on the left does not require this much screen real estate; see the screenshot.

Can this be adjusted so that more of the right panel is visible by default, to reduce the need to manually adjust it every time I want to view the results?

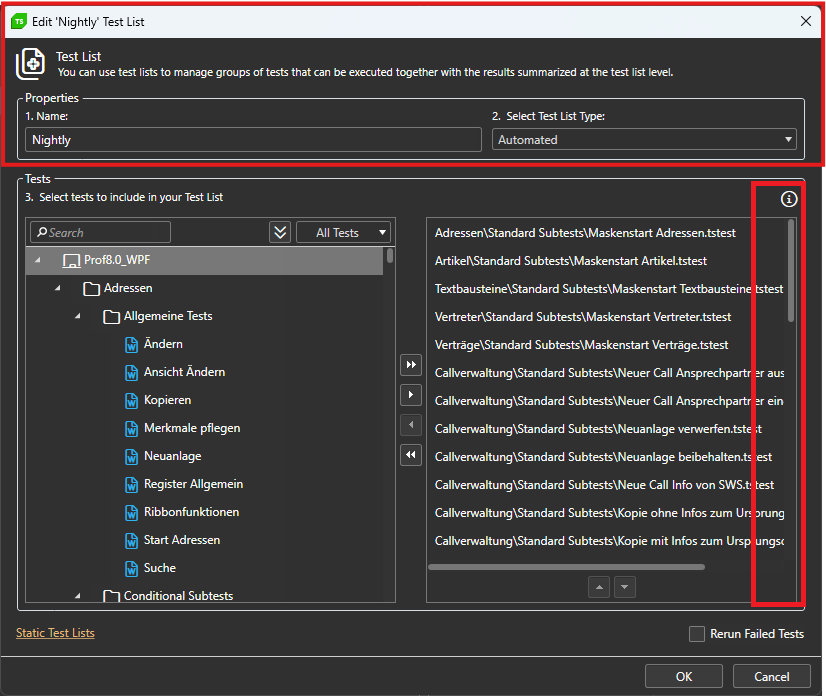

The window for sorting tests while editing the test list can't be maximized.

Long test paths can't be read properly, and the trash bin for deleting a test can only be reached by scrolling.

Dear Reader,

I have an existing test with several test steps, recorded via the recorder while traversing a web page.

With that set of recorded steps saved, there is a need to introduce a logic condition. The "STEP BUILDER" is used to insert a "Condition" of "if...else" via the "Add Step" button; this is successful.

The step is added, and the recorded test now has an additional step that needs to be given the condition for the "if...else" clause. Opening the dropdown of the "IF" statement shows a list of all the available steps in the test. Locating one of these steps is easy. What is not so simple is selecting that step so that it is accepted as the "IF" statement's condition: the desired item in the open dropdown list must be left-clicked in rapid succession, five or sometimes seven times or even more, before the click registers. A successful selection is a surprise every time it happens, as a single or even a double click does not do what would be reasonable behaviour.

I trust the above is sufficient to replicate the UX and allow a fix to be achieved.

Kind regards.

While we have attempted workarounds, such as capturing WebSocket traffic using Fiddler, these solutions do not yield the expected results within Test Studio. The inability to accurately simulate WebSocket communication during load testing compromises the reliability of our performance assessments.

Adding WebSocket support would greatly enhance the functionality of Test Studio and better serve the needs of developers working with modern real-time applications.

Once I have the browser up and running I don't need to start from 'Log onto URL'. Hence my high partial test run use.

I may be running only part of a test, but when the test is 500 steps long it's cumbersome to highlight the section I want to run and where to stop.

It would be much easier to set a breakpoint, run the part of the test I need, and stop midway.
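To picture the request, here is a minimal sketch of running a slice of a long test from a chosen step up to a breakpoint. It is not how Test Studio executes tests; `run_steps`, `start`, and `breakpoints` are hypothetical names introduced only for illustration.

```python
from typing import Callable, List, Optional, Set

# A test step is modelled as any callable that takes no arguments (assumption).
Step = Callable[[], None]


def run_steps(steps: List[Step], start: int = 0,
              breakpoints: Optional[Set[int]] = None) -> int:
    """Run steps from index `start` until a breakpoint or the end of the list.

    Returns the index of the next step that has not run yet, so a later
    call can resume from there.
    """
    breakpoints = breakpoints or set()
    index = start
    while index < len(steps):
        # Pause *before* a step that carries a breakpoint, unless we are
        # resuming from that very step.
        if index in breakpoints and index != start:
            return index
        steps[index]()
        index += 1
    return index


# Usage: a 500-step test where only steps 120..149 are of interest.
log: List[int] = []
steps = [lambda i=i: log.append(i) for i in range(500)]
paused_at = run_steps(steps, start=120, breakpoints={150})
```

In this sketch the breakpoint replaces the need to highlight a range: the run starts at the chosen step and pauses before step 150, which can later be resumed with `run_steps(steps, start=paused_at, breakpoints={150})`.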

Every day, and usually many times a day, I forget to turn it off and begin recording random steps. Then I have to go find them and delete them.

Once Annotations is on, leave it on, similar to the Debugger.

And with it, leave the ms setting where it was.

Every time I log on it takes me a few minutes to realize Annotations is off.

Edit in Code:

If no change is made to the file, do not convert the step to custom code.

I believe the staff would get fewer help tickets caused by 'coded' steps.
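One way the "no change, no conversion" check could work is to compare a digest of the file taken when it is opened with a digest taken when it is closed. The sketch below only illustrates that idea; `file_digest` and `was_modified` are hypothetical helpers, and nothing here reflects how Test Studio actually tracks edits.

```python
import hashlib
import tempfile
from pathlib import Path


def file_digest(path: Path) -> str:
    """Return the SHA-256 hex digest of the file's current contents."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def was_modified(path: Path, digest_before: str) -> bool:
    """True only if the contents differ from the snapshot taken earlier."""
    return file_digest(path) != digest_before


# Usage with a throwaway file standing in for a generated code file.
with tempfile.TemporaryDirectory() as tmp:
    code_file = Path(tmp) / "step.cs"
    code_file.write_text("// generated step\n")

    before = file_digest(code_file)            # snapshot on "Edit in Code"
    unchanged = was_modified(code_file, before)  # closed without edits

    code_file.write_text("// generated step\n// user edit\n")
    changed = was_modified(code_file, before)    # closed after a real edit
```

Under this assumption, the step would be converted to a coded step only when `was_modified` returns `True`.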

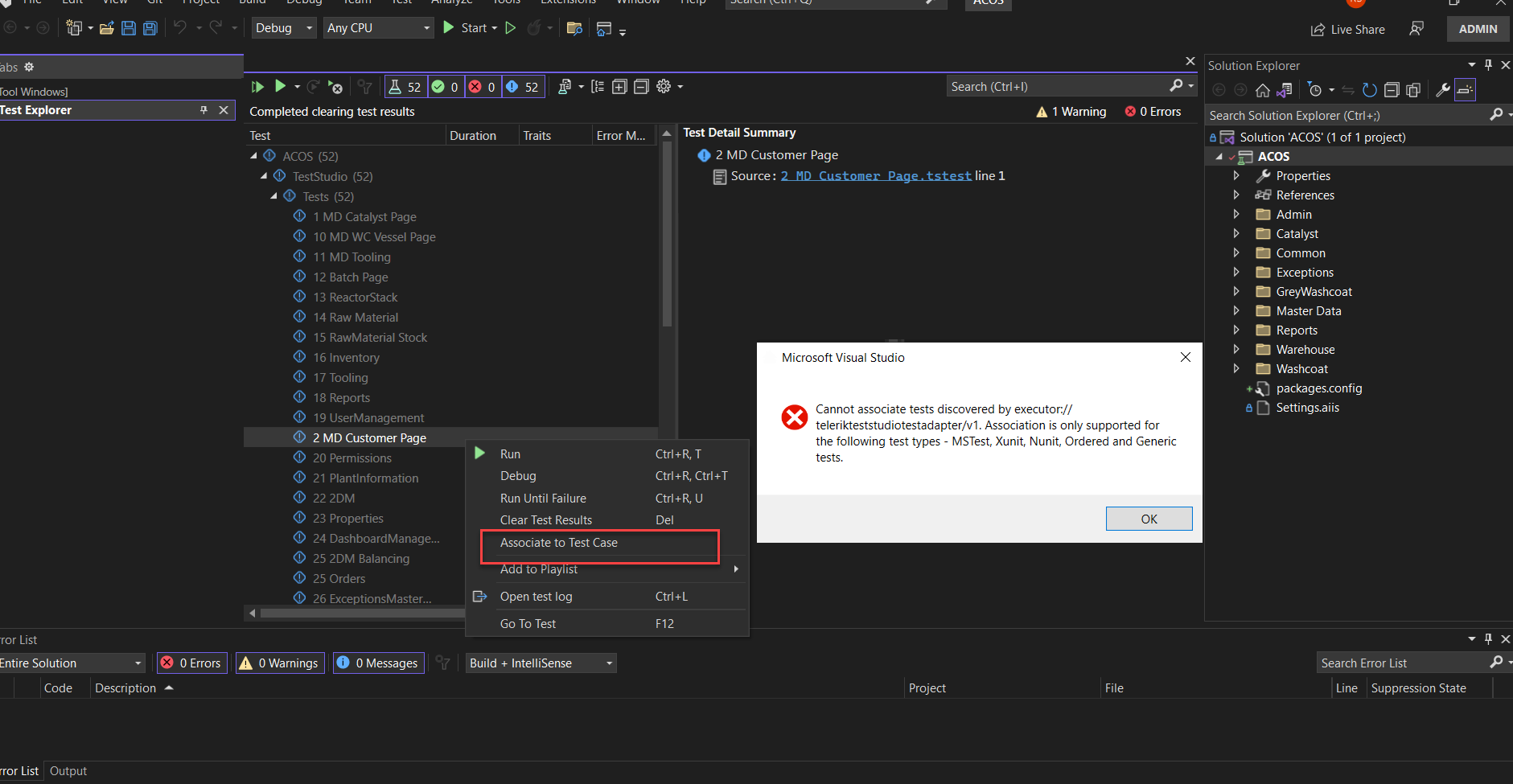

Visual Studio and Azure Pipelines only support associating unit tests with test cases, not Test Studio tests. There should be an easy way to convert Test Studio tests to unit tests (NUnit, JUnit, etc.). Otherwise, Azure DevOps CI/CD support is incomplete.

A project's BaseURL has a slash appended after the URL is entered in the project's settings. This is not ideal in environments where URLs are composed from parts like the following:

1) Protocol: http://
2) Environment: dev, uat or blank
3) Sub Domain: admin, shopping
4) Domain: mycompany.com

So when the base URL for the dev test list is "http://dev", for example, the test navigates to http://dev/shopping.mycompany.com. It would be preferable in some cases if the test navigated to http://devshopping.mycompany.com. To achieve that, there needs to be a way to remove the appended slash from the Base URL.
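The difference between the reported and the requested behaviour can be shown with plain string composition. This is only an illustration of the description above, not Test Studio's implementation; `join_with_slash` and `join_raw` are hypothetical names.

```python
def join_with_slash(base_url: str, rest: str) -> str:
    """Mimic the reported behaviour: a slash is always placed after the base URL."""
    return base_url.rstrip("/") + "/" + rest


def join_raw(base_url: str, rest: str) -> str:
    """Requested behaviour: use the base URL exactly as entered."""
    return base_url + rest


rest = "shopping.mycompany.com"

reported = join_with_slash("http://dev", rest)  # what happens today
wanted = join_raw("http://dev", rest)           # what the post asks for
blank_env = join_raw("http://", rest)           # environment left blank
```

With these inputs, `reported` is `http://dev/shopping.mycompany.com`, `wanted` is `http://devshopping.mycompany.com`, and `blank_env` is `http://shopping.mycompany.com`.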

As a workaround, use the Quick Execute button or F5.

When I run my test, which requires a button click event, it works fine. However, when that same test is run from a test list, it does not: the web page remains the same, and the visible button is not clicked.

Weird, this only seemed to happen after upgrading to 2022.1.215.0

Steps to reproduce:

- Record a sample test on https://demos.telerik.com/kendo-ui/textbox/index.

- Enter name in the KendoInput field.

- Add a step to verify the value of the KendoInput through the highlighting menu and execute these recorded steps.

Expected: Successful execution.

Actual: The test fails on the verification step and reports that the value of the KendoInput is null.

Additional note: If the verification is added for the input control (instead of using the KendoInput), it is executed as expected.

A specific test with coded steps causes Chrome to close when trying to perform a partial run using Run->To Here.

The coded step before the navigate step starts a proxy to log the traffic.

Sample project is provided internally.

There are no built-in translators for Kendo React controls.

It would be useful to revisit the story and evaluate the need for such translators.